Biography

I am a Senior Research Engineer at NVIDIA, where I work on the evaluation of Large Language Models (LLMs). I hold a PhD in Computer Science from GLADIA at Sapienza University of Rome; my doctoral research focused on building effective, efficient, and reliable LLMs. Previously, I was a Research Scientist at Nous Research and at Apple (MLR team). See Experiences for the full list.

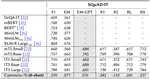

My current research focuses on the evaluation of Large Language Models. In the past, my work has spanned syntax in transformers (KERMIT), efficient decoding (Parallel Jacobi Decoding, which roughly doubles decoding speed and has been adopted by LMSYS), instruction tuning for LLMs (now a standard step in modern training pipelines), and LLM robustness and reliability (pub1, pub2). I have also explored instruction tuning for Italian (Camoscio), privacy in LLMs, audio LLMs, and multimodal neural databases. A full list of publications is available on my Google Scholar profile.

In the news: The BigScience project, to which I contributed, has been covered by outlets such as MIT Technology Review and The Washington Post. I was also featured in La Repubblica as one of the “500 Italians who matter in AI” (article in Italian). More recently, our work on LLM injectivity (a.k.a. the Pringle paper) received broad attention, with roughly 5 million views!

If you would like to connect, feel free to reach out on X, LinkedIn, or through the contact form below.

- Large Language Models

- Natural Language Processing

- Representation Learning

PhD in Computer Science, 2025

Sapienza University of Rome

MSc in Computer Science, 2020

University of Rome Tor Vergata

BSc in Computer Science, 2018

University of Rome Tor Vergata